\begin{equation}

\begin{aligned}

\frac{\partial E}{\partial h_{11}}&=\frac{\partial E}{\partial net_{o_{11}}} \cdot \frac{\partial net_{o_{11}}}{\partial h_{11}}+...\\

&+\frac{\partial E}{\partial net_{o_{33}}} \cdot \frac{\partial net_{o_{33}}}{\partial h_{11}}\\

&=\delta_{11} \cdot h_{11} +...+ \delta_{33} \cdot h_{11}

\end{aligned}

\end{equation}

推论出权重的梯度:

\begin{equation}

\begin{aligned}

\frac{\partial E}{\partial h_{i,j}} = \sum_m\sum_n\delta_{m,n}out_{o_{i+m,j+n}}

\end{aligned}

\end{equation}

误差项的梯度:

\begin{equation}

\begin{aligned}

\frac{\partial E}{\partial b} =\frac{\partial E}{\partial net_{o_{11}}} \frac{\partial net_{o_{11}}}{\partial w_b} +\frac{\partial E}{\partial net_{o_{12}}} \frac{\partial net_{o_{12}}}{\partial w_b}\\

+\frac{\partial E}{\partial net_{o_{21}}} \frac{\partial net_{o_{21}}}{\partial w_b} +\frac{\partial E}{\partial net_{o_{22}}} \frac{\partial net_{o_{22}}}{\partial w_b}\\

\end{aligned}

\end{equation}

可以看出,偏置项的偏导等于这一层所有误差敏感项之和。得到了权重和偏置项的梯度后,就可以根据梯度下降法更新权重和梯度了。

池化层的反向传播

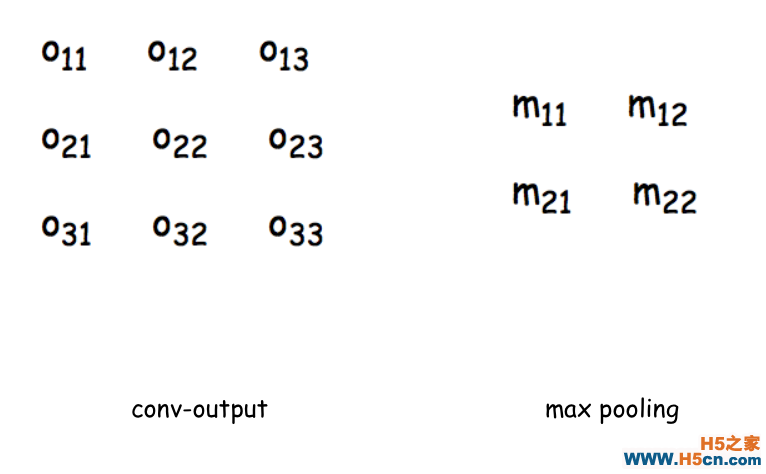

池化层的反向传播就比较好求了,看着下面的图,左边是上一层的输出,也就是卷积层的输出feature_map,右边是池化层的输入,还是先根据前向传播,把式子都写出来,方便计算:

假设上一层这个滑动窗口的最大值是$out_{o_{11}}$

\begin{equation}

\begin{aligned}

&\because net_{m_{11}} = max(out_{o_{11}},out_{o_{12}},out_{o_{21}},out_{o_{22}})\\

&\therefore \frac{\partial net_{m_{11}}}{\partial out_{o_{11}}} = 1\\

& \frac{\partial net_{m_{11}}}{\partial out_{o_{12}}}=\frac{\partial net_{m_{11}}}{\partial out_{o_{21}}}=\frac{\partial net_{m_{11}}}{\partial out_{o_{22}}} = 0\\

&\therefore \delta_{11}^{l-1} = \frac{\partial E}{\partial out_{o_{11}}} = \frac{\partial E}{\partial net_{m_{11}}} \cdot \frac{\partial net_{m_{11}}}{\partial out_{o_{11}}} =\delta_{11}^l\\

&\delta_{12}^{l-1} = \delta_{21}^{l-1} =\delta_{22}^{l-1} = 0

\end{aligned}

\end{equation}

这样就求出了池化层的误差敏感项矩阵。同理可以求出每个神经元的梯度并更新权重。

手写一个卷积神经网络

1.定义一个卷积层

首先我们通过ConvLayer来实现一个卷积层,定义卷积层的超参数

1 class ConvLayer(object): 参数含义: 4 input_width:输入图片尺寸――宽度 5 input_height:输入图片尺寸――长度 6 channel_number:通道数,彩色为3,灰色为1 7 filter_width:卷积核的宽 8 filter_height:卷积核的长 9 filter_number:卷积核数量 10 zero_padding:补零长度 11 stride:步长 12 activator:激活函数 13 learning_rate:学习率 (self, input_width, input_height, 16 channel_number, filter_width, 17 filter_height, filter_number, 18 zero_padding, stride, activator, 19 learning_rate): 20 self.input_width = input_width 21 self.input_height = input_height 22 self.channel_number = channel_number 23 self.filter_width = filter_width 24 self.filter_height = filter_height 25 self.filter_number = filter_number 26 self.zero_padding = zero_padding 27 self.stride = stride 28 self.output_width = \ 29 ConvLayer.calculate_output_size( 30 self.input_width, filter_width, zero_padding, 31 stride) 32 self.output_height = \ 33 ConvLayer.calculate_output_size( 34 self.input_height, filter_height, zero_padding, 35 stride) 36 self.output_array = np.zeros((self.filter_number, 37 self.output_height, self.output_width)) 38 self.filters = [] 39 for i in range(filter_number): 40 self.filters.append(Filter(filter_width, 41 filter_height, self.channel_number)) 42 self.activator = activator 43 self.learning_rate = learning_rate

其中calculate_output_size用来计算通过卷积运算后输出的feature_map大小

相关文章

相关文章

![[深度学习]实现一个博弈型的AI,从五子棋开始(1) - xerwin](/upload8/allimg/171115/1202222B9_lit.png)

精彩导读

精彩导读 热门资讯

热门资讯 关注我们

关注我们